Why Some Industries Are Hesitant in Fully Embracing AI


Author: Elisabeth Derbyshire

Tech evangelists find much to celebrate in the artificial intelligence transformation, but in many industries the outlook is far more muted, and not without reason. Executives remain wary of wholeheartedly adopting AI, and it’s not just a matter of technological conservatism.

For regulated industries, such as healthcare and finance, the adoption of AI brings with it real compliance nightmares, data privacy landmines, and liability questions that don’t yet have simple answers. “Move fast and break things” isn’t much of a strategy when patient outcomes or someone’s life savings are at stake.

But here’s the fascinating thing: the companies most hostile to AI may be the ones that need it most. Understanding why may alter how you think about digital transformation.

Understanding the Root Causes of AI Hesitation

While many chief executives talk up the benefits of AI on their investor calls, the ground truth is quite different. Full AI adoption is dragging in many industries. But why?

A. Financial Barriers and Implementation Costs

The hefty price of implementing AI makes many businesses shy away. And we’re not just talking about purchasing software; it’s an entire ecosystem of costs:

- Initial investment in AI platforms and tools (often six or seven figures)

- Computing infrastructure upgrades

- Data preparation and cleaning (which can take months)

- Ongoing maintenance and updates

B. Legacy System Integration Challenges

Most established companies aren't building tech stacks from scratch. They're dealing with:

- Decades-old systems that don't "talk" to modern AI platforms

- Data stuck in silos across departments

- Custom-built software that would need a complete overhaul

C. Regulatory and Compliance Hurdles

Different industries face different regulatory headaches:

| Industry | Key Regulatory Challenges |
| --- | --- |
| Healthcare | HIPAA, patient data protection |
| Finance | Anti-money laundering, fraud detection requirements |
| Insurance | Risk assessment transparency rules |

These rules were not drafted with AI in mind. The financial industry, in particular, struggles with “black box” AI systems that make decisions without evident criteria, which is a serious problem for compliance officers who must justify those decisions.

D. Technical Expertise Shortages

You can't implement AI without the right people, and that's a major problem right now.

- Data scientists command salaries upwards of $150,000

- Competition for AI talent is fierce, especially from tech giants

- The skills gap keeps widening rather than narrowing

Many companies try to retrain their current IT staff for AI work, but turning a network administrator into a machine learning expert doesn’t happen overnight. Others work with consulting firms, but that kind of outsourcing can erode internal knowledge.

Smaller businesses and non-tech industries are hit hardest by this talent crunch. When Google is offering $300K+ packages to AI talent, what’s a regional insurance company supposed to do?

High-Stakes Industries with Cautious AI Adoption

Healthcare: When Human Judgment Remains Critical

The healthcare sector isn’t exactly rushing to pass the scalpel to robots. And can you blame them? When a misdiagnosis can be life or death, a healthy dose of caution only makes sense.

Doctors are still hesitant to fully automate life-and-death decisions. Even as AI shows remarkable progress in pattern recognition in radiology and pathology, the human touch still reigns in complex diagnoses where intuition and experience come into play. A seasoned physician discerns subtle cues (a patient’s hesitation, a slight shift in symptoms, idiosyncrasies of family history) that even the most sophisticated algorithms can miss.

Regulatory barriers make the matter more complex. For good reason, FDA approval of medical AI tools is rigorous and lengthy. Healthcare systems are also concerned about liability: if an AI system recommends the wrong treatment, who is at fault?

Legal Sector: Ethical and Accountability Concerns

The legal system works at, well, the speed of legal precedent—that is, slowly. Law firms have turned to AI for things like e-discovery and legal research, but they’re putting on the brakes when it comes to making judgment calls.

The core issue? Accountability. When an algorithm recommends a legal strategy, the attorney remains professionally responsible for the advice. In multiple jurisdictions, bar associations are struggling to adapt their ethics rules to AI, particularly where attorney-client privilege and confidentiality are concerned.

And legal reasoning so often requires nuanced interpretations of complex situations that don’t neatly boil down to code. A judge’s discretion is built over years of weighing competing interests and is not easily quantified in algorithms.

Financial Services: Risk Management Complexities

Banks and investment companies are all in on algorithms for trading and for handling basic customer service issues, but when AI-driven decision-making puts large sums at risk, they get nervous.

The 2008 financial crisis was a painful lesson in the danger of relying too much on models that nobody quite understood. Banks now face regulatory demands for “explainability.” When a loan application is denied, customers and regulators alike insist on knowing why, and “because the AI said so” doesn’t pass muster.

AI systems trained on historical data can flounder when faced with unprecedented scenarios such as a global pandemic. Financial leaders recall how quantitative models crumpled in market crashes, making them appropriately leery of giving up too much control.

Education: The Irreplaceable Human Element

Teaching is so much more than the transmission of information.

The most effective learning happens through relationships. Students perform at their best when teachers understand their learning styles, emotions, and personal circumstances. In essence, AI is a valuable tool, but the human element remains irreplaceable in education.

The skills we need now more than ever, critical thinking and creativity, are formed by social interaction, debate, and mentorship. Studies highlight troubling trends: for example, a 39% increase in ADHD diagnoses linked to digital multitasking, a dramatic surge in sleep deprivation due to late-night device use, and widespread reports of technology negatively affecting academic performance and mental health.

In addition, many schools confront practical obstacles: tight budgets, gaps in tech infrastructure, and teachers who need to develop expertise before they can use AI tools effectively.

Airlines: Fight or Flight

So what’s behind the hesitation?

- Safety Concerns: There are doubts about AI’s capacity to handle the complexity, ambiguity, and uncertainty of aviation. Unlike people, AI systems may have trouble responding to the unexpected, stoking fears of misplaced trust and eventual failures.

- Cybersecurity Risks: AI relies on vast amounts of data, making it susceptible to cybersecurity attacks. Keeping this information encrypted but still useful for AI is a significant challenge.

- Absence of Human Judgment: AI does not have the nuanced judgment or ethical decision-making ability of human pilots and staff, particularly under emergency or uncertain situations. This void prompts the question of whether or not AI can adequately substitute or supplement human tasks.

- Job Displacement: AI replacing humans is a cause for concern for society and the economy.

- Ethical and Privacy Issues: Setting guidelines for ethical AI use is challenging. AI methods rely on personal data, so both ethics and privacy become critical under aviation’s stringent regulatory rules.

- Regulatory and Legal Challenges: Aviation law is a few steps behind on AI. The absence of clear standards and regulations hinders adoption and leaves airlines in limbo.

- Data Management and Talent Shortage: Airlines have a tough time harmonizing large data sets to implement AI. In addition, there is intense competition for AI talent, making it difficult to build and keep skilled in-house AI teams.

- High Implementation Costs and Operational Disruptions: A significant financial investment is required for AI, and its integration poses a risk of disruption to daily IT operations, presenting substantial challenges, particularly for small carriers.

- Passenger Trust: Passengers’ reluctance to board AI-operated flights can affect airlines’ image and profitability.

Not every carrier is holding back. Riyadh Air is taking an "AI-first" approach to redefine the travel experience. The company aims to create highly intelligent, efficient, and personalized journeys for its guests while optimizing internal operations.

Key points about Riyadh Air's AI technology include:

- Riyadh Air has formed strategic partnerships with global AI and data experts, including Artefact and IBM, to develop cutting-edge AI solutions that span its entire business and corporate functions.

- The AI applications focus on building a data analytics platform that enables hyper-personalization of guest experiences, improving guest services through intelligent digital channels, and optimizing flight and ground operations via real-time data insights and predictions.

- The AI solutions allow Riyadh Air to offer highly targeted sales channels for Air and non-Air products.

- Artefact and Riyadh Air have co-developed a strategic AI blueprint focused on agentic AI—specialized AI agents supporting specific business functions—such as business intelligence, guest service automation, dynamic pricing, crew planning, and real-time disruption management.

- A centralized AI orchestrator routes requests to appropriate AI agents, ensuring accurate and context-aware outputs for employees and guests.

- Over 50 AI use cases have been identified and prioritized across commercial, flight operations, guest experience, loyalty, and maintenance domains. Examples include predictive maintenance, flight delay prediction, guest lifetime value modeling, and AI-powered content generation.

- IBM is implementing its watsonx AI platform at Riyadh Air, deploying AI-powered virtual assistants for customer and employee self-service and emphasizing data security, privacy, regulatory compliance, and responsible AI adoption.

- Riyadh Air aims to create an AI-first airline with seamless, personalized digital-first travel experiences, targeting its maiden flight in late 2025 and servicing over 100 destinations by 2030.

- The AI-driven initiatives also include automated policy generation, sentiment analysis of passenger reviews, real-time KPI tracking in a digital boardroom, and marketing content generation with 3X productivity gains.

- Riyadh Air’s AI strategy aligns with Saudi Arabia’s National Aviation and Tourism Strategies to significantly boost visitors to the Kingdom by 2030.

Riyadh Air is harnessing a comprehensive and integrated AI technology approach to redesign air travel through personalization, operational efficiency, and intelligent automation, positioning itself as a pioneer in the future of aviation.

Construction: Cracking the Concrete Code

Key challenges include:

- High Initial Costs and Investment Risks: Implementing AI requires significant upfront spending on digital infrastructure, data systems, and specialized software, which many firms, especially small and medium-sized ones, find prohibitive.

- Project Uniqueness and Data Limitations: Each construction project is unique, making it difficult for AI to generalize from one project’s data to another. This limits AI’s adaptability and the value of collected data unless AI can handle changing environments effectively.

- Data Quality and Management Challenges: Construction sites generate vast amounts of unstructured and fragmented data. Poor data governance, incomplete or low-quality data, and difficulties in sharing data across stakeholders hinder effective AI use.

- Cybersecurity and Legal Risks: AI systems are vulnerable to cyberattacks, and legal issues arise around transparency, intellectual property, privacy, bias, and accountability. Lack of clear regulations and potential liabilities make companies cautious.

- Skilled Workforce Shortage: There is a shortage of AI professionals with construction domain expertise, and the industry also faces a broader skilled labor shortage. This limits the ability to implement and maintain AI solutions.

- Resistance to Change and Organizational Reluctance: The traditionally conservative construction sector often resists new technologies due to fear of disruption, lack of trust in AI decisions, and unclear understanding of AI’s benefits and capabilities.

- Disconnect Between Planning and Implementation: Unclear understanding of what AI can realistically solve leads to failed projects and mistrust, further slowing adoption.

- Concerns Over Job Loss and Ethical Issues: Automation fears and ethical considerations about AI decision-making contribute to apprehension.

In essence, the construction industry’s slow AI adoption stems from costly investments, data and cybersecurity challenges, workforce and skill gaps, cultural resistance, and regulatory uncertainties—all compounded by the sector’s project complexity and fragmentation. Overcoming these barriers requires better data management, workforce upskilling, leadership buy-in, phased implementation, and clear regulatory frameworks.

The Fear Factor: Addressing Common AI Anxieties

A. Job Displacement Concerns Among Workforces

What is the biggest concern about using AI? Jobs. It's what makes employees worry, and it stops executives from approving automation projects.

Surveys from 2025 indicate widespread concern among employees about AI's impact on their skills and job security. For organizations, this highlights a critical need to provide adequate AI training and to focus on how AI can augment human capabilities rather than simply replace them.

These fears aren't irrational. A 2025 survey found that nearly half of current workers (47%) view AI as a threat to their jobs. This anxiety is driving a surge in upskilling, with 62% reporting that AI advancements have them considering upskilling or reskilling to remain competitive. Millennials (54%) are most likely to worry about AI posing a threat to their jobs. Another survey found that while many workers find AI helpful for productivity, the top downsides mentioned were "job role insecurity" and "losing skills by relying too much on AI."

Henley Business School (May 2025, surveying UK workers) reported that while 36% of respondents worried about being replaced by AI, 61% were not concerned about job losses. The same research found that 61% feel overwhelmed by the rapid development of AI.

Professor Keiichi Nakata from Henley Business School added: “Artificial intelligence is something that, when used strategically and responsibly, could be a transformative change in organisations across the UK. It has the ability to simplify complex tasks, take away the boring jobs, and enable workers to have more time to focus on the things that really matter.”

Forward-thinking organizations are strategically dismantling these anxieties by:

- Involving employees in AI implementation decisions

- Creating clear retraining pathways

- Showing how AI can ease the tedium of work rather than simply eliminate jobs

- Communicating honestly about changing role requirements

B. Risks to Privacy and Security of Data

AI systems are data monsters. They are fed huge volumes of data, which presents serious privacy headaches.

Companies that handle large amounts of private customer data must exercise extreme caution due to the dual risks of external data breaches and internal misuse, both of which can have severe financial and reputational consequences.

GDPR and CCPA Risks:

Regulations like the General Data Protection Regulation (GDPR) in Europe and the California Consumer Privacy Act (CCPA) in the U.S. impose strict requirements on how organizations collect, store, process, and share personal data. Non-compliance can result in substantial fines.

For example:

- Under GDPR, penalties can reach up to €20 million or 4% of the company's annual global turnover—whichever is higher.

- CCPA allows fines of up to $7,500 per violation, plus the risk of civil lawsuits by affected customers.
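To make the arithmetic behind these ceilings concrete, here is a minimal Python sketch. The function names and the sample company figures are illustrative assumptions, not taken from either regulation, and real penalties depend on the regulator and the circumstances of each case.

```python
def gdpr_max_fine_eur(annual_global_turnover_eur: int) -> float:
    """Ceiling described above: EUR 20 million or 4% of turnover, whichever is higher."""
    four_percent = annual_global_turnover_eur * 4 / 100
    return max(20_000_000, four_percent)


def ccpa_max_fine_usd(violations: int, per_violation_usd: int = 7_500) -> int:
    """Ceiling described above: up to USD 7,500 for each violation."""
    if violations < 0:
        raise ValueError("violations must be non-negative")
    return violations * per_violation_usd


if __name__ == "__main__":
    # Hypothetical companies, for illustration only.
    print(gdpr_max_fine_eur(100_000_000))    # 4% is below the 20M figure, so 20M applies
    print(gdpr_max_fine_eur(2_000_000_000))  # 4% exceeds 20M, so the percentage applies
    print(ccpa_max_fine_usd(1_000))          # 1,000 violations at the per-violation ceiling
```

The point of the sketch is the "whichever is higher" rule: for large companies the percentage dominates, so exposure scales with revenue rather than stopping at a fixed amount.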

Customer Trust:

A single breach or misuse of data can quickly erode customer confidence, which is difficult to regain. Reputational harm often lasts much longer than the immediate financial penalties.

AI "Black Box" Concerns:

Internally, the use of artificial intelligence and machine learning introduces additional challenges:

- Many algorithms function as "black boxes," generating results without transparency into how those results are derived.

- This opacity makes it hard to explain decisions to customers, auditors, or regulators, which is increasingly demanded by modern data ethics standards.

- If AI systems accidentally misuse or mishandle sensitive data, companies may be unable to clearly demonstrate compliance or appropriate data usage.

Best Practices for Security and Compliance:

- Companies such as Klart AI emphasize that security should be a foundational aspect of their platforms, helping clients maintain data protection and regulatory compliance.

- Transparent AI models, robust data governance frameworks, and thorough security protocols are essential for responsibly leveraging customer data while staying within the bounds of regulations and maintaining trust.

Given the legal, ethical, and business imperatives, proactive data security and transparent AI processes are essential for any data-driven organization in today’s regulatory environment.

C. Ethical Dilemmas in AI Decision-Making

If your algorithm denies someone a loan, who’s to blame? What if it misdiagnoses a medical condition or wrongly pegs someone as a security threat?

These are not questions for the future; they are challenges faced right now as AI begins to tackle more and more important decisions.

This ethical minefield only becomes more treacherous when you factor in:

- Algorithmic bias that can preserve and even amplify existing social biases

- A lack of diversity in AI development teams, which leads to blind spots

- Ethical norms that differ by country and culture

- A responsibility vacuum when no human is in immediate control

A lot of industries, particularly those with life-or-death implications such as healthcare or the criminal justice system, are proceeding with great caution precisely because of those ethical questions.

D. Loss of Human Control in Critical Processes

Beneath much of the anxiety about AI’s power lies a fundamental fear of losing control over AI systems, particularly as they become embedded in critical infrastructure such as manufacturing, financial markets, healthcare, transportation, and energy. This shift raises several practical problems:

- Skill decay: When humans increasingly cede decision-making to AI, their practical skills weaken due to lack of use.

- System dependence and failure risks: Heavy reliance on AI systems can lead to vulnerabilities when these systems fail unexpectedly or inexplicably.

- Difficulty in intervention: Automated processes may fail in ways that are difficult for humans to detect or intervene in promptly.

- Diminishing institutional memory: Knowledge and processes embedded within AI systems become "locked in," making it harder to understand or reverse AI-driven decisions or adapt when systems change.

These concerns are especially acute in critical fields where failure can lead to deadly consequences, not merely financial loss.

Challenges and risks of AI in critical infrastructure include:

- Cybersecurity vulnerabilities and attacks: AI systems increase the cyberattack surface and may be targets of data poisoning, model poisoning, or system compromise, resulting in a significant impact on mission-critical functions.

- Difficulty maintaining human oversight and explainability: AI must provide suggestions and decisions that are valid, explainable, and understandable in real time to enable effective human intervention. For example, a pilot needs to grasp quickly why an AI suggests a specific maneuver.

- Decaying human skills and distancing from tasks: As AI automates more functions, operators' skills atrophy, reducing their capacity to manage or troubleshoot problems when systems fail.

- Complex, poorly understood system behaviors: Early deployment of AI-driven subsystems may occur before limitations are fully understood, increasing the risks of catastrophic failure in complex, interconnected infrastructure.

- Uneven resource capability across organizations: Smaller or less-resourced critical infrastructure providers struggle to adopt and manage AI safely, heightening systemic risk.

Mitigation strategies recommended include:

- Maintaining strong cybersecurity hygiene and continuous monitoring of AI systems embedded in critical infrastructure.

- Ensuring ongoing human oversight and clear, real-time explainability of AI decisions to keep humans “in the loop” effectively.

- Developing organizational cultures of AI risk management, including governance, risk mapping, measurement, and prioritized risk action plans.

- Supporting less-resourced organizations to build capacity for AI adoption and risk management.

- Learning from past technology integrations (e.g., network connectivity) to anticipate attack vectors and system vulnerabilities.

- Developing trusted vendors and data provenance frameworks to protect against malicious influence and improve reliability.

The question "What if something goes wrong?" remains pressing due to the high stakes—especially in healthcare, transportation, and energy sectors—where AI failures can have dire physical consequences.

In summary, the fear of losing control over AI in critical infrastructure is well-founded due to vulnerabilities in system design, complexity, and human factors. However, thorough risk assessment, continued human oversight, stringent cybersecurity, explainability, and supported organizational adoption can help mitigate these fears and harness AI benefits safely.

Failed AI Implementations: Learning from Mistakes

A. High-Profile AI Project Failures and Their Consequences

The AI landscape is strewn with costly failures, which can make business leaders hesitant to join the AI bandwagon. Remember IBM's Watson Health? Despite spending billions on the healthcare AI solution, IBM sold it off in 2022 for a fraction of what it had invested. The issue was less the technology itself than unreasonable expectations and overselling of what AI could accomplish in complex, messy medical situations.

Another example is Microsoft’s Tay chatbot, which began posting racist content within 24 hours of its release in 2016. That PR disaster still haunts discussions of AI ethics today.

Ripple Effects of AI Failures

- Financial Losses: Companies can face steep costs from service outages, regulatory fines, and loss of business or investment. Stock prices often drop sharply after widely publicized AI errors, and entire sectors—like transportation or healthcare—may suffer knock-on financial setbacks if public trust is shaken.

- Privacy Violations: Major AI failures sometimes expose personal or proprietary data. A malfunctioning AI-driven security system might leak sensitive information, leading to identity theft, blackmail, or the loss of intellectual property.

- Job Losses: Rapid, unplanned AI deployments or dramatic failures can both disrupt job markets. Employees may be let go as a crisis response, or new regulatory pressures could force industries to downsize, delay hiring, or transition jobs away from automation.

- Mental Health Effects: People directly affected by AI failures—such as patients misdiagnosed by a healthcare AI or drivers harmed in autonomous vehicle accidents—may experience anxiety, loss of trust, and psychological distress. Even employees in affected sectors can report increased job insecurity, burnout, and stress.

- Legal Accountability: High-profile failures often trigger lawsuits, regulatory actions, or class-action litigation. Companies and their leaders can face criminal or civil penalties, and new legislation may arise to address perceived gaps in oversight or consumer protection.

B. Common Implementation Pitfalls to Avoid

| Mistake | Description |
| --- | --- |
| Lack of Clear Business Objectives | Too many companies kick off AI initiatives because of hype or competitive pressure, not because they have clear, measurable business goals. While the technologies are very interesting, they don't drive real business value or ROI. |
| Misalignment Between Business and Technical Teams | They misdiagnose problems, force solutions, and have unrealistic expectations. Many ambitious AI projects have failed because the people in charge did not understand some basic things. While company leaders pursue the potential of AI, they often implement technologies without a clear plan, turning potentially revolutionary tools into expensive decorations that don't actually solve anything. |
| Poor Data Quality and Management | AI systems need high-quality, relevant data. Many projects fail because they don't have enough good data, don't handle data well, or can't get the data they need. |
| Overemphasis on Technology | |
| Unrealistic Expectations & Change Management | Overhyped promises where AI is perceived as a quick fix or magic bullet, which leads to unrealistic expectations about its capabilities and timelines. |

AI is powerful, but it’s not magic.

C. The True Cost of Rushed AI Adoption

The hidden costs of half-baked AI implementation make the visible price tag look tiny by comparison. Beyond the obvious technology investment, failed AI initiatives create organizational trauma that lingers for years.

When General Electric rushed AI adoption across its industrial divisions without proper planning, it didn't just waste $62 million in direct costs. It created a corporate culture resistant to future innovation attempts, a cost impossible to quantify but devastating to long-term competitiveness.

Beyond money, rushed AI adoption breaks trust. Employees who experience a failed AI implementation are 3x more likely to resist future digital transformation initiatives. Customers subjected to glitchy AI interactions take their business elsewhere 78% of the time.

This explains why smart companies are taking a measured approach. They've seen too many competitors burn millions on AI mirages while neglecting the foundational work needed for success.

Bridging the AI Confidence Gap

Building Trustworthy AI Systems

The trust issue is the elephant in the room when it comes to AI adoption. Companies aren't just worried about functionality—they're concerned about reliability and consistency.

Trust isn't built overnight. It comes from AI systems that consistently deliver accurate results under real-world conditions. Think about healthcare: doctors need to know that an AI diagnostic tool will catch critical symptoms 99.9% of the time in clinical practice, not just in controlled tests.

What's working? Companies that have successfully built trust focus on:

- Rigorous validation against diverse datasets

- Clear performance metrics that matter to end-users

- Regular audits by third parties

- Documented safety protocols

Hybrid Approaches: Augmenting Rather Than Replacing

The "humans vs. machines" narrative misses the point completely. The most successful AI implementations don't replace people—they make them better.

AI-supported breast screening detected 29% more cases of cancer compared with traditional screening. More invasive cancers were also clearly detected at an early stage using AI. That's not replacement—that's supercharging human capability.

Smart companies are:

- Keeping humans in critical decision loops.

- Using AI to handle routine tasks so people can focus on complex problems.

- Creating interfaces that make AI recommendations transparent and adjustable.

- Training teams on when to trust AI and when to question it.
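One common way to keep humans in the decision loop is to let the system act on its own only when a case is both low-stakes and high-confidence. The Python sketch below illustrates that idea. The `Recommendation` type, the `route` function, and the 0.9 threshold are hypothetical choices made for illustration, not a description of any specific product.

```python
from dataclasses import dataclass


@dataclass
class Recommendation:
    label: str         # what the model suggests, e.g. "approve"
    confidence: float  # model's self-reported confidence in [0, 1]
    high_stakes: bool  # set by business rules, not by the model itself


def route(rec: Recommendation, threshold: float = 0.9) -> str:
    """Return "auto" only for confident, low-stakes cases; otherwise "human_review"."""
    if not 0.0 <= rec.confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if rec.high_stakes or rec.confidence < threshold:
        return "human_review"
    return "auto"


if __name__ == "__main__":
    print(route(Recommendation("approve", 0.95, high_stakes=False)))
    print(route(Recommendation("approve", 0.95, high_stakes=True)))
    print(route(Recommendation("deny", 0.60, high_stakes=False)))
```

Keeping the high-stakes flag outside the model means a confident but wrong prediction still reaches a person whenever the consequences are serious.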

Industry-Specific AI Solutions That Address Unique Concerns

Generic AI doesn't cut it for specialized industries. Legal firms don't want general language models—they need systems that understand case law and precedent.

Manufacturing, healthcare, and finance—each has distinct requirements, regulations, and risk profiles that demand tailored approaches.

Success stories come from companies that:

- Customize models with industry-specific data.

- Design workflows that respect existing processes.

- Address regulatory requirements from day one.

- Solve specific pain points rather than forcing technology where it doesn't fit.

Transparent AI Development and Deployment Practices

Companies hesitant about AI adoption often cite the inability to understand how decisions are made.

Transparency means:

- Explainable AI that can justify its recommendations.

- Documentation of training data sources and potential biases.

- Clear policies on data usage and privacy.

- Regular reporting on performance and limitations.

The EU's GDPR is widely read as giving individuals a "right to explanation" for automated decisions, although the scope of that right is debated. Smart companies aren't waiting for regulation to settle; they're building transparency into their AI from the ground up.

Future Outlook: The Path to Responsible AI Integration

Evolving Regulatory Frameworks

The Wild West days of AI are coming to an end. Governments worldwide are finally catching up, creating guardrails that both protect people and give businesses clearer directions. As of mid-2025, we're seeing a patchwork of approaches.

Meanwhile, the US has taken a sector-by-sector approach, with financial regulators requiring explainability standards for lending algorithms and healthcare agencies demanding rigorous testing protocols.

What does this mean for hesitant industries? The regulatory clarity helps. Companies that were sitting on the fence now have concrete compliance targets rather than vague fears about what might become illegal later.

Next-Generation AI with Better Explainability

The "black box" problem has haunted AI adoption from day one. But the newest models are changing the game entirely.

Many of the 2025 generation of machine learning systems now come with built-in explanation mechanisms. These tools can show their work, highlighting which factors influenced a particular decision and by how much.

Think about what this means for healthcare or legal applications. Doctors can now see precisely why an AI suggested a particular diagnosis. Lawyers can understand the precedents and reasoning behind AI-generated legal advice.
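As a rough illustration of what "which factors, and by how much" can mean, the Python sketch below breaks a simple linear score into per-feature contributions. It is a deliberately simplified assumption: the weights, feature names, and applicant values are invented, and more complex models need dedicated attribution methods rather than this direct arithmetic.

```python
def explain_linear_score(weights, features, bias=0.0):
    """Return (score, contributions), with contributions sorted by absolute impact."""
    contributions = {name: weights[name] * features[name] for name in weights}
    score = bias + sum(contributions.values())
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return score, ranked


# Hypothetical loan-scoring weights and one applicant, for illustration only.
weights = {"income_k": 0.02, "debt_ratio": -3.0, "late_payments": -0.5}
applicant = {"income_k": 50, "debt_ratio": 0.5, "late_payments": 1}

score, ranked = explain_linear_score(weights, applicant, bias=1.0)
for name, impact in ranked:
    print(f"{name}: {impact:+.2f}")  # signed contribution of each factor
print(f"score: {score:.2f}")
```

Because the contributions and the bias add up to the final score, a reviewer can check that the explanation accounts for the whole decision and not just part of it.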

This isn't just technical window-dressing. It's fundamentally changing how professionals view AI:

| Old AI Perception | Next-Gen AI Reality |
| --- | --- |
| Black box decisions | Transparent reasoning |
| Replaces human judgment | Augments expert analysis |
| Unexplainable results | Documented decision paths |

Collaborative Industry Standards for AI Implementation

This collaborative approach is cutting through the fear. When your entire industry agrees on standards, the risk of being a first mover drops dramatically.

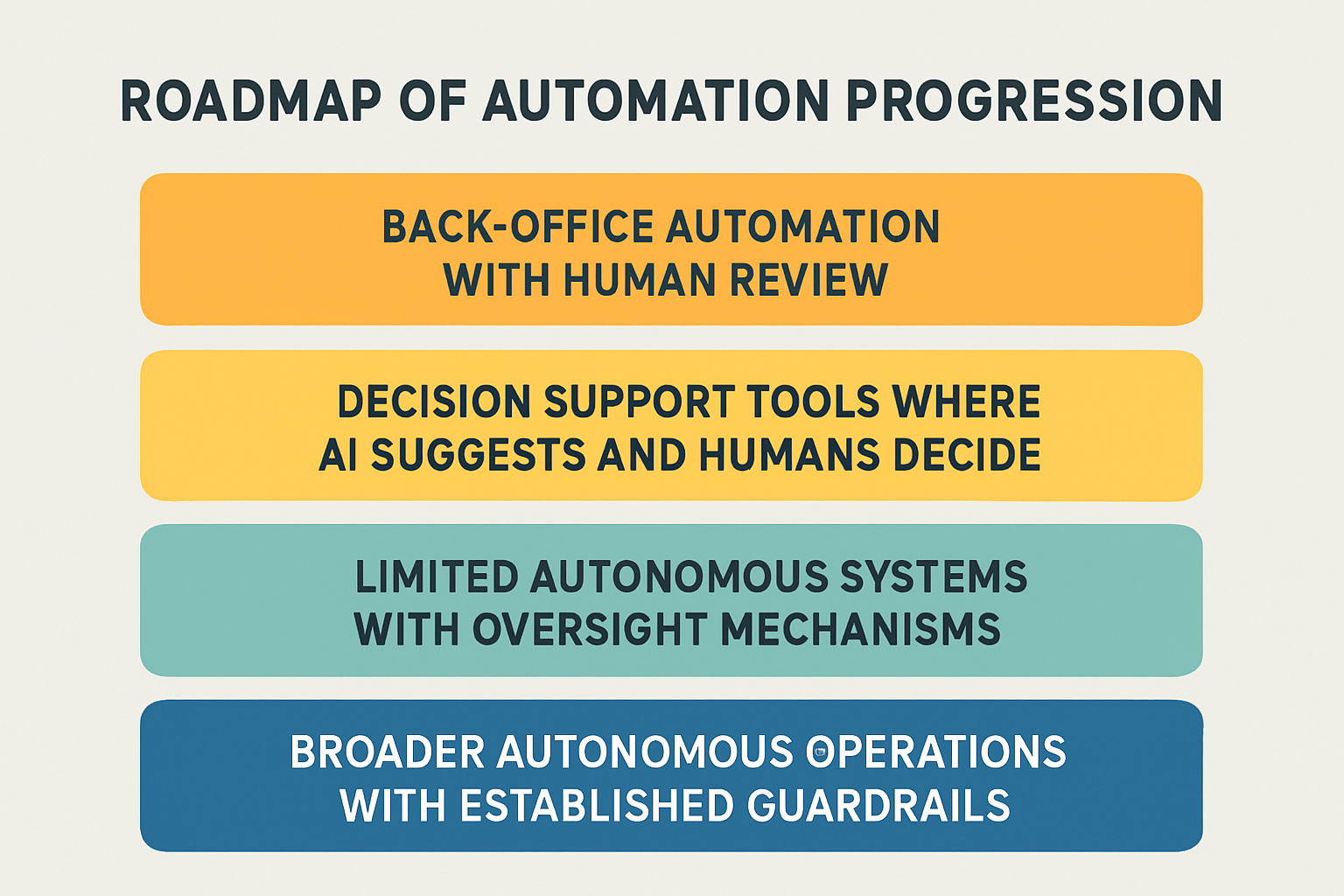

Strategic Roadmapping for Hesitant Sectors

The most resistant industries are finding their way forward through a staged, strategic approach.

Instead of the all-or-nothing approach that dominated early AI discussions, companies are now following graduated implementation plans. These typically begin with narrow, non-critical applications and expand only after successful validation.

A typical roadmap now starts with a narrow, low-risk pilot, validates the results, and only then expands to more critical work.

This measured approach is winning over skeptics in the legal, healthcare, and financial services sectors, which can't afford to "move fast and break things."

The path forward isn't about blind adoption or stubborn resistance. It's about thoughtful integration that respects both technological potential and legitimate concerns.

Embracing AI with Caution and Confidence

The journey toward AI adoption varies significantly across industries, with many high-stakes sectors understandably approaching this technological revolution with caution. As we've explored, this hesitation stems from legitimate concerns about reliability, ethics, and previous implementation failures. Organizations in the healthcare, finance, and legal sectors face unique challenges that require thoughtful approaches to AI integration, balancing innovation with their responsibility to stakeholders. By acknowledging these fears and learning from past mistakes, companies can develop more effective strategies for responsible AI adoption.


Key Questions & Answers

Why are regulated industries like healthcare and finance hesitant to adopt AI?

Regulated industries face unique challenges when adopting AI, including strict compliance requirements, data privacy concerns, and liability issues. In healthcare, HIPAA regulations and patient safety concerns make full AI automation risky, while financial services must comply with anti-money laundering laws and provide explainable decisions for loan approvals. These industries also struggle with "black box" AI systems that make decisions without clear reasoning, which doesn't meet regulatory transparency requirements. Additionally, the high stakes involved, where AI errors could affect patient outcomes or financial security, make these sectors naturally more cautious about wholesale AI adoption.

What are the main barriers preventing companies from implementing AI successfully?

The primary barriers to successful AI implementation include financial constraints (with initial investments often reaching six or seven figures), legacy system integration challenges, and technical expertise shortages. Many established companies struggle with decades-old systems that don't integrate well with modern AI platforms, while data remains siloed across departments. The talent shortage is particularly acute, with data scientists commanding salaries upwards of $150,000 and intense competition from tech giants offering $300K+ packages. Additionally, poor data quality, unrealistic expectations, and a lack of clear business objectives contribute to the 80% failure rate of AI projects.

How can companies overcome AI adoption fears and build trust in AI systems?

Companies can build trust in AI systems by focusing on transparency, gradual implementation, and hybrid approaches that augment rather than replace human capabilities. Key strategies include developing explainable AI that can justify its recommendations, maintaining rigorous validation against diverse datasets, and keeping humans in critical decision loops. Successful organizations start with low-risk, high-impact use cases before scaling to more critical applications. They also invest in employee training, create clear retraining pathways, and communicate honestly about changing role requirements. Building industry-specific solutions that address unique regulatory concerns and following collaborative industry standards further helps overcome adoption barriers.
